How To Download From Email For Offline Use

Although Wi-Fi is available everywhere these days, you may find yourself without it from time to time. And when you do, there may be websites you wish you had saved, so that you had access to them while offline, perhaps for research, entertainment, or just posterity.

It's pretty easy to save individual web pages for offline reading, but what if you want to download an entire website? Don't worry, it's easier than you think. But don't take our word for it. Here are several nifty tools you can use to download any website for offline reading, without any hassles.

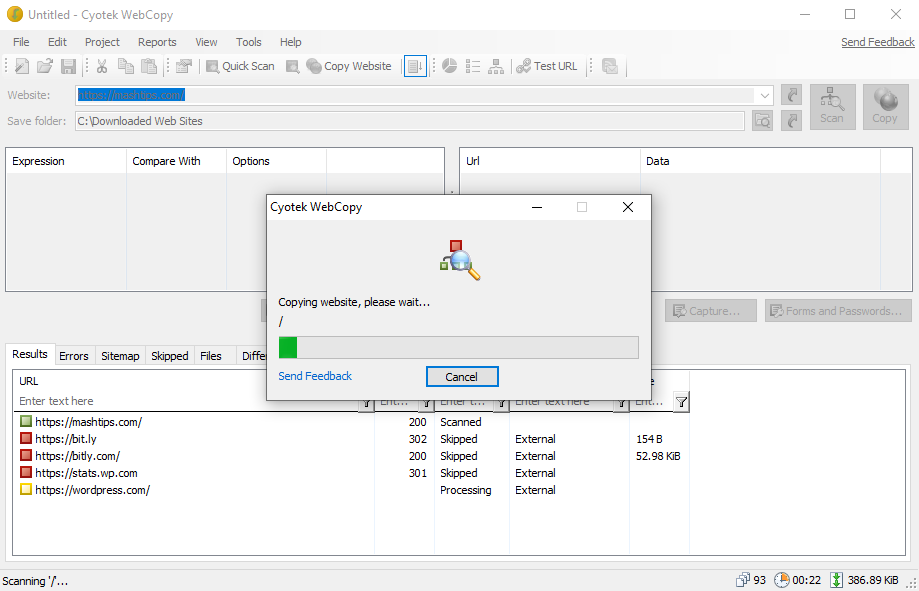

1. WebCopy

WebCopy by Cyotek takes a website URL and scans it for links, pages, and media. As it finds pages, it recursively looks for more links, pages, and media until the whole website is discovered. Then you can use the configuration options to decide which parts to download offline.
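To make the idea of recursive discovery concrete, here is a minimal sketch that works on local HTML files rather than a live site. It is not WebCopy's code; the function names `extract_links` and `crawl` are hypothetical, and it only follows plain `href="..."` values that point at files next to the page being scanned.

```shell
#!/bin/sh
# Illustrative only: recursive link discovery over LOCAL HTML files.
# Not WebCopy's implementation; all names here are hypothetical.

nl='
'
seen="$nl"   # newline-delimited list of pages already visited

# Print the value of every href="..." attribute in one file, one per line.
extract_links() {
  grep -o 'href="[^"]*"' "$1" | sed -e 's/^href="//' -e 's/"$//'
}

# Visit a page, print it, then recurse into every link that is a local file.
crawl() {
  local page dir link links
  # Canonicalize the directory so one file is never visited under two names.
  dir="$(cd "$(dirname "$1")" && pwd -P)" || return 0
  page="$dir/$(basename "$1")"
  case "$seen" in
    *"$nl$page$nl"*) return 0 ;;   # already discovered, stop here
  esac
  seen="$seen$page$nl"
  echo "$page"
  links="$(extract_links "$page")"
  while IFS= read -r link; do
    # External URLs and missing files fail this test and are skipped.
    if [ -f "$dir/$link" ]; then
      crawl "$dir/$link"
    fi
  done <<EOF
$links
EOF
}
```

Calling `crawl path/to/index.html` prints each discovered page once. The `seen` list is what keeps two pages that link to each other from looping forever.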

The interesting thing about WebCopy is that you can set up multiple projects that each have their own settings and configurations. This makes it easy to re-download many sites whenever you want, each one in the same exact way every time.

One project can copy many websites, so use them with an organized plan (e.g., a "Tech" project for copying tech sites).

How to Download an Entire Website With WebCopy

- Install and launch the app.

- Navigate to File > New to create a new project.

- Type the URL into the Website field.

- Change the Save folder field to where you want the site saved.

- Play around with Project > Rules… (learn more about WebCopy Rules).

- Navigate to File > Save As… to save the project.

- Click Copy in the toolbar to start the process.

Once the copying is done, you can use the Results tab to see the status of each individual page and/or media file. The Errors tab shows any issues that may have occurred, and the Skipped tab shows files that weren't downloaded.

But most important is the Sitemap, which shows the full directory structure of the website as discovered by WebCopy.

To view the website offline, open File Explorer and navigate to the save folder you designated. Open the index.html (or sometimes index.htm) in your browser of choice to start browsing.

Download: WebCopy for Windows (Free)

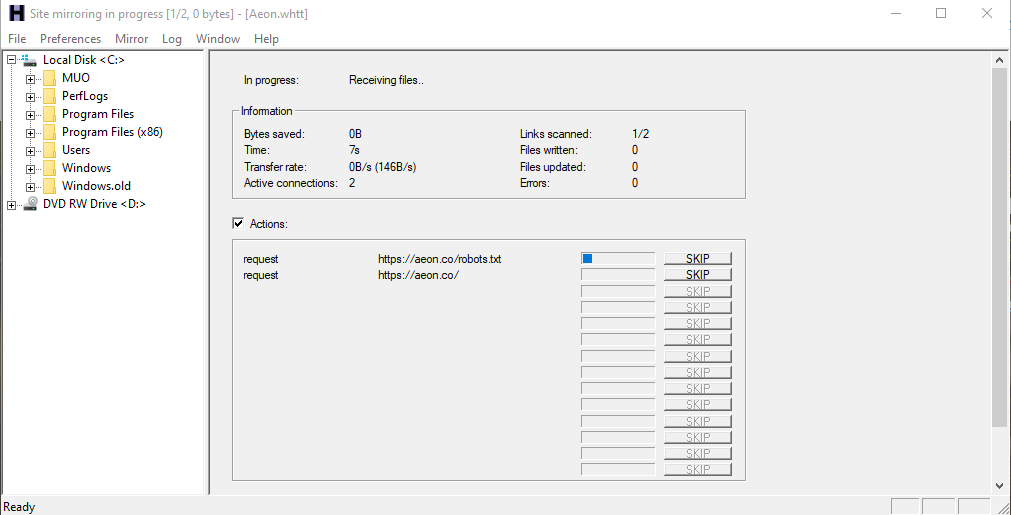

2. HTTrack

HTTrack is better known than WebCopy, and is arguably better because it's open-source and available on platforms other than Windows. The interface is a bit clunky and leaves much to be desired; however, it works well, so don't let that turn you away.

Like WebCopy, it uses a project-based approach that lets you copy multiple websites and keep them all organized. You can pause and resume downloads, and you can update copied websites by re-downloading old and new files.

How to Download a Complete Website With HTTrack

- Install and launch the app.

- Click Next to begin creating a new project.

- Give the project a name, category, and base path, then click Next.

- Select Download website(s) for Action, then type each website's URL in the Web Addresses box, one URL per line. You can also store URLs in a TXT file and import it, which is convenient when you want to re-download the same sites later. Click Next.

- Adjust parameters if you want, then click Finish.

Once everything is downloaded, you can browse the site like normal by going to where the files were downloaded and opening the index.html or index.htm in a browser.
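If the download folder contains many subfolders, finding the right entry page by hand can be tedious. The sketch below is one possible way to locate it from a terminal on Linux or macOS. The function name `find_entry_page` is made up for illustration, and it assumes the entry page is the shallowest file named index.html or index.htm.

```shell
#!/bin/sh
# Sketch: print the shallowest index.html / index.htm under a mirror folder.
# find_entry_page is a hypothetical helper, not part of HTTrack or WebCopy.

find_entry_page() {
  # Prefix each match with its "/" count (its depth), sort by that number,
  # keep the first line, then strip the prefix again.
  find "$1" -type f \( -name 'index.html' -o -name 'index.htm' \) |
    awk '{ print gsub("/", "/") "\t" $0 }' |
    sort -n |
    head -n 1 |
    cut -f2-
}
```

You could then open the result in a browser, for example with `xdg-open "$(find_entry_page ~/websites)"` on most Linux desktops or `open "$(find_entry_page ~/websites)"` on macOS. The `~/websites` path is only a placeholder for your own download folder.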

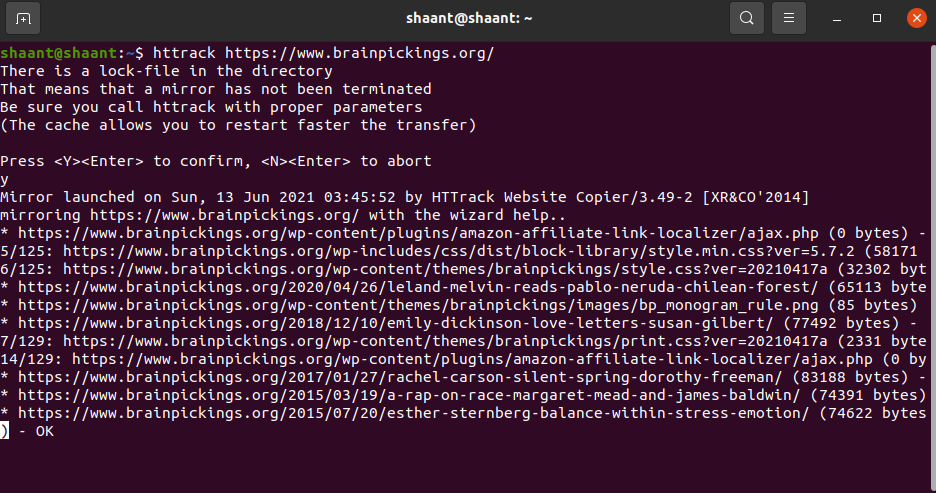

How to Use HTTrack With Linux

If you are an Ubuntu user, here's how you can use HTTrack to save a whole website:

- Launch the Terminal and type the following command:

sudo apt-get install httrack

- It will ask for your Ubuntu password (if you've set one). Type it in, and hit Enter. The Terminal will download the tool in a few minutes.

- Finally, type in this command and hit Enter. For this example, we downloaded the popular website, Brain Pickings.

httrack https://www.brainpickings.org/

- This will download the whole website for offline reading.

You can replace the website URL here with the URL of whichever website you want to download. For example, if you wanted to download the whole Encyclopedia Britannica, you'll have to tweak your command to this:

httrack https://www.britannica.com/

Download: HTTrack for Windows and Linux | Android (Free)
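The desktop app lets you keep URLs in a TXT file, and a similar workflow is possible on the command line. The following is a rough sketch under a few assumptions: the helper name `httrack_from_list`, the `DRY_RUN` switch, and the file names are all invented for this example. It relies only on HTTrack accepting several URLs in one command and on its `-O` option for choosing the output folder.

```shell
#!/bin/sh
# Sketch: mirror every URL listed in a text file with a single HTTrack run.
# httrack_from_list and DRY_RUN are hypothetical names used for illustration.

httrack_from_list() {
  local list_file out_dir line
  list_file="$1"
  out_dir="$2"
  set --   # reuse "$@" to collect the URLs

  # Read one URL per line, skipping blank lines and "#" comments.
  while IFS= read -r line || [ -n "$line" ]; do
    case "$line" in
      ''|'#'*) continue ;;
    esac
    set -- "$@" "$line"
  done < "$list_file"

  if [ "$#" -eq 0 ]; then
    echo "no URLs found in $list_file" >&2
    return 1
  fi

  # -O tells HTTrack where to store the mirror.
  set -- httrack "$@" -O "$out_dir"
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"   # print the command instead of running it
  else
    "$@"
  fi
}
```

With a file such as `sites.txt` holding one URL per line, `httrack_from_list sites.txt ~/websites` would download all of them into the same folder. Setting `DRY_RUN=1` first lets you check the command before any download starts.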

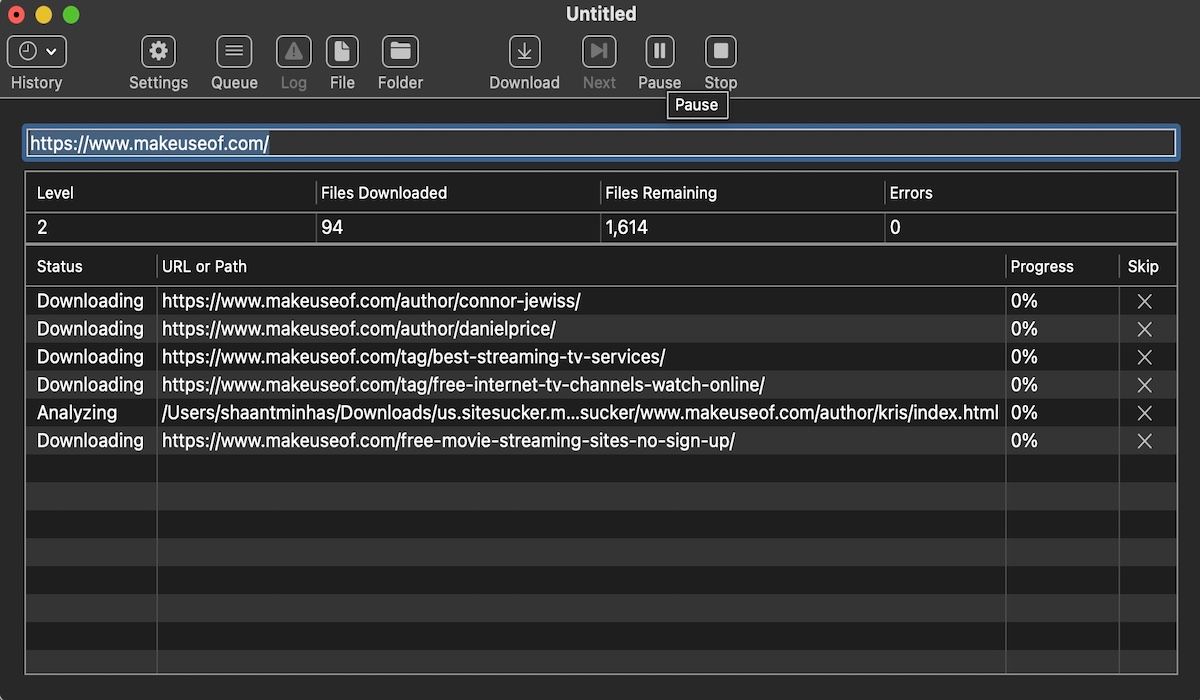

3. SiteSucker

If you're on a Mac, your best option is SiteSucker. This simple tool copies entire websites, maintains the same structure, and includes all relevant media files too (e.g., images, PDFs, style sheets).

It has a clean and easy-to-use interface: you literally paste in the website URL and press Enter.

One nifty feature is the ability to save the download to a file, and then use that file to download the same files and structure again in the future (or on another machine). This feature is also what allows SiteSucker to pause and resume downloads.

SiteSucker costs about $5 and does not come with a free version or a free trial, which is its biggest downside. The latest version requires macOS 11 Big Sur or higher. Old versions of SiteSucker are available for older Mac systems, but some features may be missing.

Download: SiteSucker for iOS | Mac ($4.99)

4. Wget

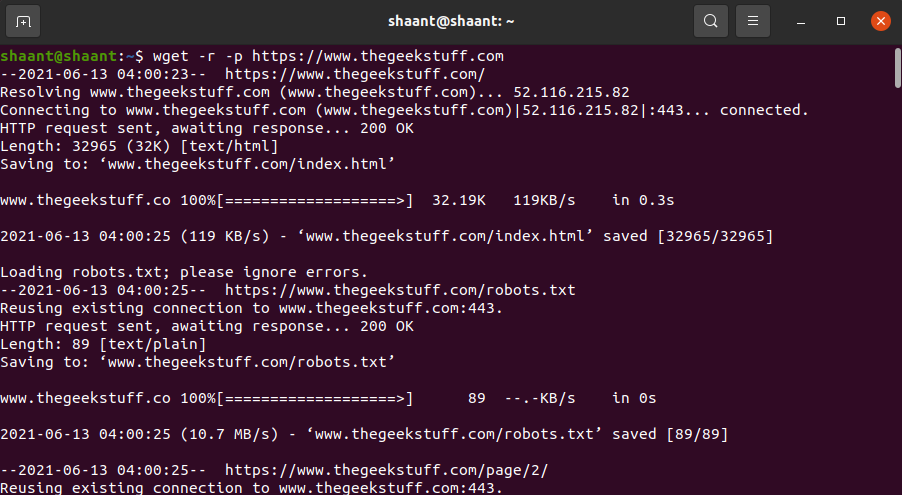

Wget is a command-line utility that can retrieve all kinds of files over the HTTP and FTP protocols. Since websites are served through HTTP and most web media files are accessible through HTTP or FTP, this makes Wget an excellent tool for downloading entire websites.

Wget comes bundled with most Unix-based systems. While Wget is typically used to download single files, it can also be used to recursively download all pages and files that are found through an initial page:

wget -r -p https://www.makeuseof.com

Depending on the size, it may take a while for the complete website to be downloaded.

However, some sites may detect and prevent what you're trying to do because ripping a website can cost them a lot of bandwidth. To get around this, you can disguise yourself as a web browser with a user agent string:

wget -r -p -U Mozilla https://www.thegeekstuff.com

If you want to be polite, you should also limit your download speed (so you don't hog the web server's bandwidth) and pause between each download (so you don't overwhelm the web server with too many requests):

wget -r -p -U Mozilla --wait=10 --limit-rate=35K https://www.thegeekstuff.com

How to Use Wget on a Mac

On a Mac, you can install Wget using a single Homebrew command: brew install wget.

- If you don't already have Homebrew installed, download it with this command:

/usr/bin/ruby -e "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/master/install)"

- Next, install Wget with this command:

brew install wget

- After the Wget installation is finished, you can download the website with this command:

wget -r -p -P path/to/local.copy http://www.brainpickings.org/

On Windows, you'll need to use this ported version instead. Download and install the app, and follow the instructions to complete the site download.
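The Wget options from this section can be collected into one reusable helper. This is only a sketch: the function name `wget_mirror` and the `DRY_RUN` switch are invented here, and two options that the article does not mention, `--convert-links` and `--no-parent`, are added on the assumption that you want the saved pages to link to each other offline and the crawl to stay below the starting URL.

```shell
#!/bin/sh
# Sketch: a "polite" recursive download using long-form Wget options.
# wget_mirror and DRY_RUN are hypothetical names; --convert-links and
# --no-parent are additions that the article itself does not use.

wget_mirror() {
  local url dest
  url="$1"
  dest="$2"

  # --recursive / --page-requisites : same as -r -p in the commands above
  # --convert-links                 : rewrite links so they work offline
  # --no-parent                     : never climb above the starting URL
  # --user-agent / --wait / --limit-rate : same values as the polite example
  # --directory-prefix              : folder that receives the download
  set -- wget \
    --recursive \
    --page-requisites \
    --convert-links \
    --no-parent \
    --user-agent=Mozilla \
    --wait=10 \
    --limit-rate=35K \
    --directory-prefix="$dest" \
    "$url"

  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"   # print the command instead of running it
  else
    "$@"
  fi
}
```

For instance, `wget_mirror https://www.thegeekstuff.com offline-copy` would save the site under `offline-copy`, a placeholder folder name. Set `DRY_RUN=1` beforehand to review the full command without downloading anything.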

Easily Download Entire Websites

Now that you know how to download an entire website, you should never be caught without something to read, even when you have no internet access. But remember: the bigger the site, the bigger the download. We don't recommend downloading massive sites like MUO because you'll need thousands of MBs to store all the media files we use.
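Because a copy can grow to thousands of MBs, it may be worth checking how much space a download folder takes up. Here is a small sketch that does so with the standard `du` utility; the helper name `mirror_size_mb` is hypothetical.

```shell
#!/bin/sh
# Sketch: report the disk space used by a mirror folder, in whole megabytes.
# mirror_size_mb is a hypothetical helper name.

mirror_size_mb() {
  local kb
  # du -sk prints one total for the folder, in 1024-byte units.
  kb="$(du -sk "$1" | cut -f1)"
  # Round up so that a partially filled megabyte still counts.
  echo $(( (kb + 1023) / 1024 ))
}
```

Running `mirror_size_mb ~/websites` (with your own folder in place of the placeholder) prints a single number, which you can compare against the free space on a laptop or USB stick before copying the site over.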


Posted by: liuuposee1952.blogspot.com
